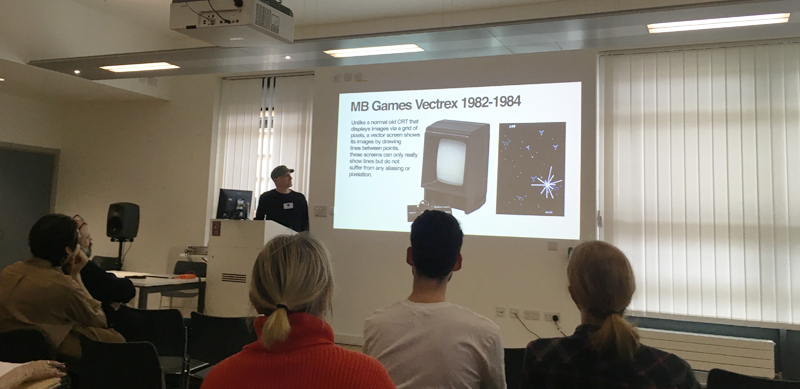

Seeing Sound is a biannual symposium on audiovisual art; this year's edition took place at Bath Spa University in March. Two EMuTeLab members presented their work:

Andrew Duff: I don’t think anyone has been electrocuted yet: Sharing info online to modify an old games console for vector oscillographics

Abstract: Since sharing a pdf online about how to modify a Vectrex (an early 1980s vector-based games console) to take in three audio signals and draw directly on the screen, as one would with an oscilloscope, I have unwittingly become a ‘go-to guy’ with regard to entering the world of vector oscillographics. I have since been invited to talk at various electronic music events and am regularly contacted with requests for support, information and recommendations on appropriate modules. All the while the games console itself becomes harder to find on the second-hand market, as it is perhaps becoming something of a cult artefact sought after by gamers and electronic artists/musicians alike.

The Vectrex offers a unique visual experience rarely found in standard oscilloscopes; it utilises three inputs for x, y and z control of the electron beam, is monochromatic, and the screen is relatively large. It does, however, have its own idiosyncrasies, which make it an interesting tool to work and perform with.

This talk will present a brief contextual history of experiments in this field, with some examples of influential oscillographic artists such as Ben Laposky & Herbert W. Franke, followed by an overview of the impact that sharing this pdf has had, with examples of work by a range of current artists, experimentalists and performers.

Andrew’s talk was part of a wider Vectrex theme at Seeing Sound, with other presentations from Derek Holzer and Douglas Nunn. You can find his slides on his website: http://users.sussex.ac.uk/~ad207/adweb/assets/seeingsound2018slides.pdf
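For readers new to the idea of driving a vector display from three signals, here is a minimal Python sketch of what such signals might look like. It is not taken from Andrew's pdf or slides: the function name `lissajous_xyz`, the frequencies, the sample rate and the use of a constant z value for brightness are all assumptions made for illustration, and the samples are normalised to the range -1 to 1 rather than to any real voltage level.

```python
import math


def lissajous_xyz(freq_x=200.0, freq_y=300.0, phase=math.pi / 2,
                  brightness=1.0, sample_rate=48000, num_samples=480):
    """Return three equal-length sample lists (x, y, z), normalised to [-1, 1].

    x and y are sine waves that would steer the beam horizontally and
    vertically; z is held constant here as a stand-in for beam brightness.
    All parameter names and default values are hypothetical.
    """
    x, y, z = [], [], []
    for i in range(num_samples):
        t = i / sample_rate  # time of this sample in seconds
        x.append(math.sin(2 * math.pi * freq_x * t + phase))
        y.append(math.sin(2 * math.pi * freq_y * t))
        z.append(brightness)
    return x, y, z


x, y, z = lissajous_xyz()
```

With a 2:3 frequency ratio between x and y, plotting the (x, y) pairs traces a Lissajous figure, the kind of shape an x/y display draws when fed two related sine waves. How the z signal maps to brightness on an actual modified Vectrex is not covered in this post, so the constant value above is only a placeholder.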

Chris Kiefer: 10K Video

10000 neural oscillators conspire to make a bad approximation of a one second video loop, while their internal networks are stretched and twisted by their own sound and their neighbours.

This is an improvised performance, where a video is resynthesised by an ensemble of neural conceptor networks, each of which has learnt (with varying degrees of success) to approximate the behaviour of an individual pixel in the original. The ghostly result is manipulated live, to send this mass of miniature feedback systems into collective overdrive.

The neural networks used in this piece are complex dynamical systems which need self-sustaining energy to continue their role; they follow a trajectory, but this trajectory can be manipulated and influenced, both between networks and from global forces; these networks can be damped, overdriven, distorted, leaked, excited, surprised, saturated and revitalised. When revitalised in synchrony they can play a rendition of the original video loop. When manipulated, this video can be distorted and effected in many directions. During the piece, the host of networks is continually revitalised, and then manipulated along infinitely varying trajectories towards noise or destruction.

Chris performed this new piece live. You can see a generative version that runs in your web browser at http://facingaway.luuma.net/ (a fairly decent GPU is needed).